We are living in an age where Artificial Intelligence (AI) is coming of age thanks to incredible advances in computing power. We may need AI for space travel if we don't rethink our present model of economic growth, which is based on exploiting natural resources without any thought for consequences such as ocean acidification and desertification.

There are two projects running at the moment that will push the boundaries of machine learning: a) Elizabeth Swann, and b) Mayflower MAS400.

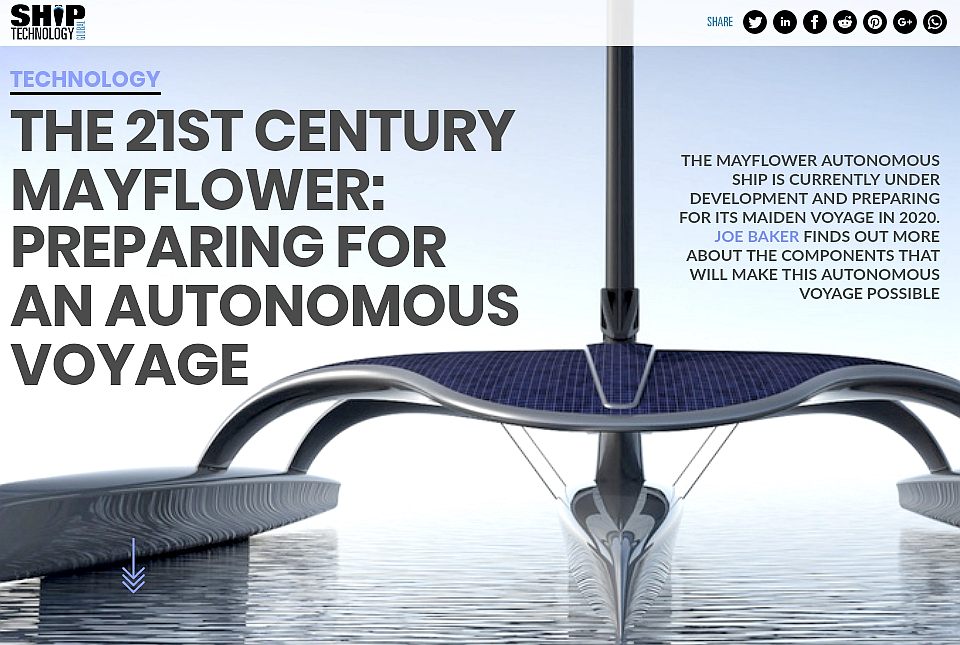

MAYFLOWER 2016 - 2021

The MAS400 project is already underway, with IBM behind the AI Captain that the team hope (as do we) will steer their 15 meter trimaran across the Atlantic, retracing the route of the Pilgrim Fathers in 1620. This vessel is powered by a diesel engine driving a generator, which in turn feeds an electric motor turning a conventional propeller. Solar panels are fitted to help provide the power to run the onboard systems, not to propel the ship. The team, led by Promare, hope to eventually circumnavigate the globe with this boat. But at the moment it does not feature autonomous docking and needs to be refuelled at regular intervals. Hence, it can never be truly autonomous.

OUTLINE DIAGRAM: The Elizabeth Swann is a 54 meter trimaran with active outrigger hulls (or sponsons) that allow the vessel to trim for very efficient running - the first vessel in the world with that feature. She has solar wings that track the sun and fold for storms, and a wind turbine on a mast that can be raised, or furled in high winds, also robotically controlled. Again, these features have not been seen before on an ocean going machine. She is designed to improve on the transatlantic speed record for solar powered, electrically propelled ships, currently standing at 5.3 knots. The hope is to raise the record to as near 10 knots as possible, to demonstrate that commercial ships might be zero emission.

ELIZABETH SWANN

By contrast, the Elizabeth Swann project features a solar and wind powered trimaran, 54 meters in length, designed to push the boundaries of zero emission marine transport from 5.3 knots to as close as possible to the green lane cruising speed of 10 knots. This vessel also features autonomous navigation, with Captain Nemo AI and Hal AI, and adds unmanned docking to allow a circumnavigation where the vessel berths itself and casts off, if that is part of an operator's parameters.

Such a feature will be useful in speeding up turnaround times in ports, where solar powered ships are likely to be smaller than the 200,000 ton, 400 meter behemoths presently plying the waves on bunker fuels.

POLITICS

You may think that many of our government leaders suffer from Artificial Intelligence Deficiency Syndrome (AIDS) and we couldn't agree more. Many of the very basic AI robots today have more original thoughts than some political leaders, who appear to have been programmed with the same basic parameters:

- Never give a direct answer to a direct question.
- Always pass the buck.
- Resist change at all costs.
- Grab all the perks going.
- Do anything to score a few political brownie points, including invading another country.
- Promise anything to get elected, then once elected continue with their own agendas.

You may think the above is unfair, but a simple program would suffice to generate the political rhetoric of any party for about ten years. Genuine leadership requires fresh solutions to tomorrow's problems, not quick fixes for those arising today. Generally, politicians and policy makers only think about what happened yesterday and use that feedback to plot a course for stability that looks good to the electorate. But that scenario has led to global warming and pandemics that control humans and cost lives.

Stability in today's terms is unsustainable, since it relies on oil flowing at the same rate and price, regardless of the environmental consequences, and on profit for bankers who earn big salaries for producing nothing at all. In other words, riding on the backs of all those coming fresh into the world. That is of course slavery.

Any robot, cybernetic or biological machine that might be produced that is artificially intelligent would very soon figure out the above and use logical programming to try to resolve the dilemma. Where do you think that will lead?

Maybe politicians will be outlawed as contrary to the natural progression of humanity. Yet we don't want a 'Hal' situation developing.

MACHINES & ROBOTS

Artificial Intelligence (AI) is the intelligence of machines and robots, and the branch of computer science that aims to create it. AI textbooks define the field as "the study and design of intelligent agents", where an intelligent agent is a system that perceives its environment and takes actions that maximize its chances of success. John McCarthy, who coined the term in 1955, defined it as "the science and engineering of making intelligent machines."
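The textbook definition of an intelligent agent can be illustrated with a minimal sketch in Python. Everything here is an assumption made for illustration: the toy world (a boat on a number line heading for a harbour at position 10), the names `choose_action`, `closeness` and `run_agent`, and the scoring rule are hypothetical and are not taken from either vessel project. The agent perceives its position, scores each available action, and takes the one that scores best.

```python
from typing import Callable, Sequence

# Hypothetical toy world: a boat on a number line trying to reach a harbour.
HARBOUR = 10
ACTIONS = (-1, 0, 1)  # step back, hold position, step forward


def choose_action(percept: int,
                  actions: Sequence[int],
                  score: Callable[[int, int], float]) -> int:
    """Pick the action with the highest score for the current percept."""
    return max(actions, key=lambda action: score(percept, action))


def closeness(position: int, step: int) -> float:
    """Assumed measure of success: higher means nearer the harbour."""
    return -abs(HARBOUR - (position + step))


def run_agent(start: int, max_steps: int = 50) -> list[int]:
    """Perceive, choose, act; repeat until the harbour is reached."""
    position = start
    track = [position]
    for _ in range(max_steps):
        if position == HARBOUR:
            break
        position += choose_action(position, ACTIONS, closeness)
        track.append(position)
    return track


print(run_agent(7))   # [7, 8, 9, 10]
print(run_agent(12))  # [12, 11, 10]
```

A real agent would replace `closeness` with an estimate learned from data or derived from a model of the environment, but the perceive-score-act loop stays the same.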

AI research is highly technical and specialised, deeply divided into subfields that often fail to communicate with each other. Some of the division is due to social and cultural factors: subfields have grown up around particular institutions and the work of individual researchers. AI research is also divided by several technical issues. There are subfields which are focused on the solution of specific problems, on one of several possible approaches, on the use of widely differing tools and towards the accomplishment of particular applications.

The central problems of AI include such traits as reasoning, knowledge, planning, learning, communication, perception and the ability to move and manipulate objects. General intelligence (or "strong AI") is still among the field's long term goals. Currently popular approaches include statistical methods, computational intelligence and traditional symbolic AI. There are an enormous number of tools used in AI, including versions of search and mathematical optimization, logic, methods based on probability and economics, and many others.

The field was founded on the claim that a central property of humans, intelligence (the sapience of Homo sapiens), can be so precisely described that it can be simulated by a machine. This raises philosophical issues about the nature of the mind and the ethics of creating artificial beings, issues which have been addressed by myth, fiction and philosophy since antiquity. Artificial intelligence has been the subject of tremendous optimism but has also suffered stunning setbacks. Today it has become an essential part of the technology industry, providing the heavy lifting for many of the most difficult problems in computer science.

BACKGROUND

Thinking machines and artificial beings appear in Greek myths, such as Talos of Crete, the bronze robot of Hephaestus, and Pygmalion's Galatea. Human likenesses believed to have intelligence were built in every major civilization: animated cult images were worshiped in Egypt and Greece and humanoid automatons were built by Yan Shi, Hero of Alexandria and Al-Jazari. It was also widely believed that artificial beings had been created by Jābir ibn Hayyān, Judah Loew and Paracelsus. By the 19th and 20th centuries, artificial beings had become a common feature in fiction, as in Mary Shelley's Frankenstein or Karel Čapek's R.U.R. (Rossum's Universal Robots). Pamela McCorduck argues that all of these are examples of an ancient urge, as she describes it, "to forge the gods". Stories of these creatures and their fates discuss many of the same hopes, fears and ethical concerns that are presented by artificial intelligence.

Mechanical or "formal" reasoning has been developed by philosophers and mathematicians since antiquity. The study of logic led directly to the invention of the programmable digital electronic computer, based on the work of mathematician Alan Turing and others. Turing's theory of computation suggested that a machine, by shuffling symbols as simple as "0" and "1", could simulate any conceivable (imaginable) act of mathematical deduction. This, along with concurrent discoveries in neurology, information theory and cybernetics, inspired a small group of researchers to begin to seriously consider the possibility of building an electronic brain.

The field of AI research was founded at a conference on the campus of Dartmouth College in the summer of 1956. The attendees, including John McCarthy, Marvin Minsky, Allen Newell and Herbert Simon, became the leaders of AI research for many decades. They and their students wrote programs that were, to most people, simply astonishing: Computers were solving word problems in algebra, proving logical theorems and speaking English. By the middle of the 1960s, research in the U.S. was heavily funded by the Department of Defense and laboratories had been established around the world. AI's founders were profoundly optimistic about the future of the new field: Herbert Simon predicted that "machines will be capable, within twenty years, of doing any work a man can do" and Marvin Minsky agreed, writing that "within a generation ... the problem of creating 'artificial intelligence' will substantially be solved".

They had failed to recognize the difficulty of some of the problems they faced. In 1974, in response to the criticism of Sir James Lighthill and ongoing pressure from the US Congress to fund more productive projects, both the U.S. and British governments cut off all undirected exploratory research in AI. The next few years would later be called the "AI winter" because funding for projects was hard to find.

In the early 1980s, AI research was revived by the commercial success of expert systems, a form of AI program that simulated the knowledge and analytical skills of one or more human experts. By 1985 the market for AI had reached over a billion dollars. At the same time, Japan's fifth generation computer project inspired the U.S. and British governments to restore funding for academic research in the field. However, beginning with the collapse of the Lisp Machine market in 1987, AI once again fell into disrepute, and a second, longer lasting AI winter began.

In the 1990s and early 21st century, AI achieved its greatest successes, albeit somewhat behind the scenes. Artificial intelligence is used for logistics, data mining, medical diagnosis and many other areas throughout the technology industry. The success was due to several factors: the increasing computational power of computers (see Moore's law), a greater emphasis on solving specific sub-problems, the creation of new ties between AI and other fields working on similar problems, and a new commitment by researchers to solid mathematical methods and rigorous scientific standards.

On 11 May 1997, Deep Blue became the first computer chess-playing system to beat a reigning world chess champion, Garry Kasparov. In 2005, a Stanford robot won the DARPA Grand Challenge by driving autonomously for 131 miles along an unrehearsed desert trail. Two years later, a team from CMU won the DARPA Urban Challenge when their vehicle autonomously navigated 55 miles in an urban environment while responding to traffic hazards and adhering to all traffic laws. In February 2011, in a Jeopardy! quiz show exhibition match, IBM's question answering system, Watson, defeated the two greatest Jeopardy! champions, Brad Rutter and Ken Jennings, by a significant margin. The Kinect, which provides a 3D body-motion interface for the Xbox 360, uses algorithms that emerged from lengthy AI research, as does the iPhone's Siri.

POPULAR PORTRAYAL & ETHICS

Artificial Intelligence is a common topic in both science fiction and projections about the future of technology and society. The existence of an artificial intelligence that rivals human intelligence raises difficult ethical issues, and the potential power of the technology inspires both hopes and fears.

In fiction, Artificial Intelligence has appeared fulfilling many roles. These include:

* a servant (R2D2 in Star Wars)
* a law enforcer (K.I.T.T. in Knight Rider)
* a comrade (Lt. Commander Data in Star Trek: The Next Generation)
* a conqueror/overlord (The Matrix, Omnius)
* a dictator (With Folded Hands)
* a benevolent provider/de facto ruler (The Culture)
* a supercomputer (the Red Queen in Resident Evil)
* an assassin (Terminator)
* a sentient race (Battlestar Galactica/Transformers/Mass Effect)
* an extension to human abilities (Ghost in the Shell)
* the savior of the human race (R. Daneel Olivaw in Isaac Asimov's Robot series)

Mary Shelley's Frankenstein considers a key issue in the ethics of artificial intelligence: if a machine can be created that has intelligence, could it also feel? If it can feel, does it have the same rights as a human? The idea also appears in modern science fiction, including the films I, Robot, Blade Runner and A.I.: Artificial Intelligence, in which humanoid machines have the ability to feel human emotions. This issue, now known as "robot rights", is currently being considered by, for example, California's Institute for the Future, although many critics believe that the discussion is premature. The subject is discussed in depth in the 2010 documentary film Plug & Pray.

Martin Ford, author of The Lights in the Tunnel: Automation, Accelerating Technology and the Economy of the Future, and others argue that specialized artificial intelligence applications, robotics and other forms of automation will ultimately result in significant unemployment as machines begin to match and exceed the capability of workers to perform most routine and repetitive jobs. Ford predicts that many knowledge-based occupations, and in particular entry level jobs, will be increasingly susceptible to automation via expert systems, machine learning and other AI-enhanced applications. AI-based applications may also be used to amplify the capabilities of low-wage offshore workers, making it more feasible to outsource knowledge work.

Joseph Weizenbaum wrote that AI applications cannot, by definition, successfully simulate genuine human empathy and that the use of AI technology in fields such as customer service or psychotherapy was deeply misguided. Weizenbaum was also bothered that AI researchers (and some philosophers) were willing to view the human mind as nothing more than a computer program (a position now known as computationalism). To Weizenbaum these points suggested that AI research devalues human life.

Many futurists believe that artificial intelligence will ultimately transcend the limits of progress. Ray Kurzweil has used Moore's law (which describes the relentless exponential improvement in digital technology) to calculate that desktop computers will have the same processing power as human brains by the year 2029. He also predicts that by 2045 artificial intelligence will reach a point where it is able to improve itself at a rate that far exceeds anything conceivable in the past, a scenario that science fiction writer Vernor Vinge named the "singularity".

Robot designer Hans Moravec, cyberneticist Kevin Warwick and inventor Ray Kurzweil have predicted that humans and machines will merge in the future into cyborgs that are more capable and powerful than either. This idea, called transhumanism, which has roots in Aldous Huxley and Robert Ettinger, has been illustrated in fiction as well, for example in the manga Ghost in the Shell and the science-fiction series Dune. In the 1980s, artist Hajime Sorayama's Sexy Robots series was painted and published in Japan, depicting the organic human form with life-like muscular metallic skins; the later book "The Gynoids" was used by, or influenced, movie makers including George Lucas and other creatives. Sorayama never considered these organic robots to be a real part of nature, but always an unnatural product of the human mind, a fantasy existing in the mind even when realized in actual form. Almost 20 years later, the first AI robotic pet (AIBO) became available as a companion to people.

AIBO grew out of Sony's Computer Science Laboratory (CSL). Famed engineer Dr. Toshitada Doi is credited as AIBO's original progenitor: in 1994 he started work on robots with artificial intelligence expert Masahiro Fujita within Sony's CSL. Doi's friend, the artist Hajime Sorayama, was enlisted to create the initial designs for AIBO's body. Those designs are now part of the permanent collections of the Museum of Modern Art and the Smithsonian Institution, and later versions of AIBO have been used in studies at Carnegie Mellon University. In 2006, AIBO was added to Carnegie Mellon University's "Robot Hall of Fame".

Political scientist Charles T. Rubin believes that AI can be neither designed nor guaranteed to be friendly. He argues that "any sufficiently advanced benevolence may be indistinguishable from malevolence." Humans should not assume machines or robots would treat us favorably, because there is no a priori reason to believe that they would be sympathetic to our system of morality, which has evolved along with our particular biology (which AIs would not share).

Edward Fredkin argues that "artificial intelligence is the next stage in evolution", an idea first proposed by Samuel Butler's "Darwin among the Machines" (1863), and expanded upon by George Dyson in his book of the same name in 1998.
